Running top-of-the-line models ain’t cheap. Burning Opus tokens is expensive.

Yesterday, Anthropic emailed all Claude Pro and Max subscribers to let them know it is closing the gates to “third-party harnesses”. That’s a polite way of saying OpenClaw users.

Anthropic launched Claude Code with a fixed monthly price. The idea was to let developers think about what they could build next rather than worry about what it would cost. But from day one, people came up with ways to make the most of that all-you-can-eat buffet. They wrote infinite loops that kept Claude busy, so Anthropic introduced session windows. Users then set up cron jobs to maximise their usage, which led to weekly limits. An arms race kicked off.

When OpenClaw launched, this arms race started to hurt the vendor. That agentic assistant did a lot of work, and for every heartbeat, it called Claude. Servers got overloaded, performance suffered, and the business case for those Max accounts started to look bleak. The more OpenClaw agents went live, the worse the Claude Code experience got.

Paperclip is another third-party harness. It sets up a company of AI agents. The CEO agent assembles a team of engineering drones and QA bots to take on individual tasks. Most of the tokens are burned by agents checking whether there is work to do, and much of the remainder goes to infighting and ticket hand-offs. Only a fraction is spent on the actual work.

It creates a situation not unlike the engineering department of a large enterprise. Meeting culture, lots of cooks in the kitchen, an abundance of alignment calls, middle management and communication back-and-forth.

Both Paperclip and OpenClaw are impressive, but extremely wasteful. And that’s a lesson we might want to learn sooner rather than later.

My OpenClaw agent burned through €160 of Opus in a matter of days while doing almost nothing. I handed the development of Daily Doom off to a Paperclip company, only for it to burn through my weekly Codex allowance just trying to assemble a team.

These are not inefficiencies we can live with.

Throughout the history of software engineering, we have always struggled with limitations. Putting Super Mario Bros on an 8-bit machine forced the designers to develop crazy resource-saving techniques. In the early internet days, 56K modems pushed engineers to invent bandwidth-saving protocols.

The same will now happen with intelligent tools.

Agents are great at handling decisions and calling the right tools. But using OpenClaw to give you a daily weather update is wasteful. Most things don’t need autonomy.

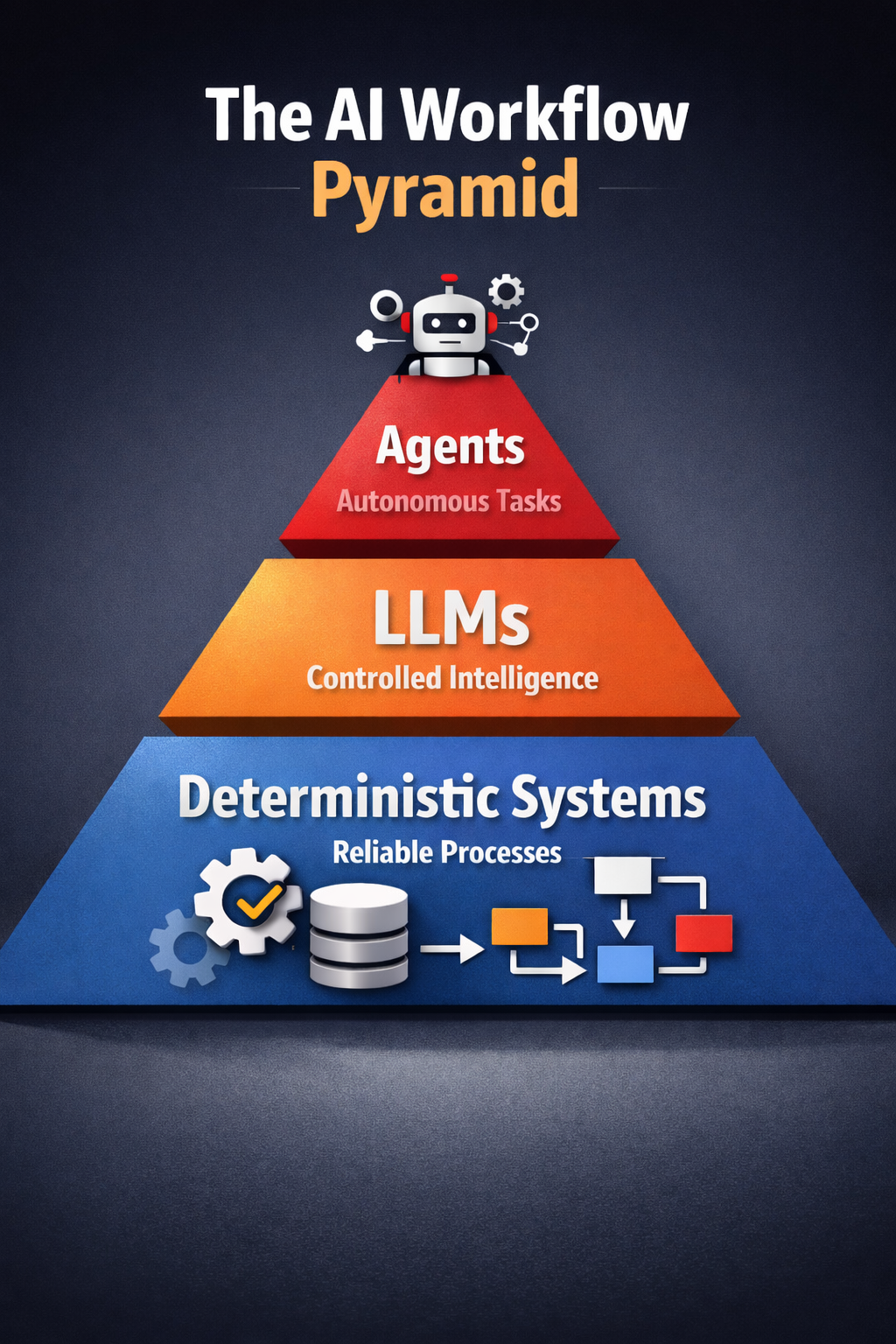

The automation workflows we will see in the coming months will not be fully agentic. They will have cheap, reliable deterministic processes at the base. Some of the work will be offloaded to LLMs. And only a tiny fraction of that work will be agentic.

We will get something that resembles the famous Testing Pyramid for test automation.

Agents will interact with the outside world and be involved in planning. But they will trigger deterministic, bespoke workflows rather than solve problems in an agentic way. Some of these tools will use LLMs. Most of them will be designed by carbon brains.

The virtual assistant of the future will not be a fully agentic OpenClaw. The agent will read your messages as it does today. But it will not use a browser to try to find the right Google Sheet. It will use a deterministic, human-designed workflow that triggers a tool like gws.

The autonomous development process of the future will most likely not involve a self-appointed Scrum Master bot. It will be a deterministic workflow that will trigger a Code Review Agent at the right time.
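To make that shape concrete, here is a minimal Python sketch under stated assumptions. `PullRequest`, `review_workflow` and `fake_review_agent` are hypothetical names made up for illustration, and the "agent" is a plain stub rather than a real LLM call. Cheap deterministic gates (tests, lint, empty diff) run first and spend no tokens; the expensive agent is only invoked once every gate has passed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PullRequest:
    # Hypothetical, minimal model of a pull request for this sketch.
    number: int
    tests_passed: bool
    lint_passed: bool
    changed_lines: int


def review_workflow(pr: PullRequest, review_agent: Callable[[PullRequest], str]) -> str:
    """Run deterministic gates first; call the (costly) agent only at the end."""
    # Deterministic checks: plain conditionals, zero LLM tokens.
    if not pr.tests_passed:
        return "blocked: tests failing"
    if not pr.lint_passed:
        return "blocked: lint errors"
    if pr.changed_lines == 0:
        return "skipped: empty diff"
    # Only now is a Code Review Agent worth the tokens.
    return review_agent(pr)


def fake_review_agent(pr: PullRequest) -> str:
    # Stand-in for a hypothetical LLM-backed Code Review Agent.
    return f"review requested for PR #{pr.number}"


if __name__ == "__main__":
    prs = [
        PullRequest(1, tests_passed=False, lint_passed=True, changed_lines=40),
        PullRequest(2, tests_passed=True, lint_passed=True, changed_lines=0),
        PullRequest(3, tests_passed=True, lint_passed=True, changed_lines=12),
    ]
    for pr in prs:
        print(review_workflow(pr, fake_review_agent))
```

In a real setup, the gate values would come from CI results and `review_agent` would wrap whichever model client you use; both are deliberately left abstract here. Of the three pull requests above, only the last one ever reaches the agent.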

That means we will most likely see less “magic” where agentic teams assemble themselves to get to an outcome. We will see more workflows that contain agents at the heart but are mostly deterministic.

That’s not as sexy as the agent-first hype.

But it’s cheaper, more reliable and a lot more efficient. Purpose-built, predictable and designed by humans.

That is what matters in automation.